Gallant Lab Natural Short Clips 3T fMRI Data

Summary

This data set contains BOLD fMRI responses in human subjects viewing a set of

natural short movie clips. The functional data were collected in five subjects,

in three sessions over three separate days for each subject. Details of the

experiment are described in the original publication [1].

The natural short movie clips used in this dataset are identical to those used

in a previous experiment described in [2]. However, the functional data is different.

This data set contains full brain responses recorded every two seconds with a 3T scanner [1].

The data set described in [2] contains responses from the occipital lobe only,

recorded every second with a 4T scanner.

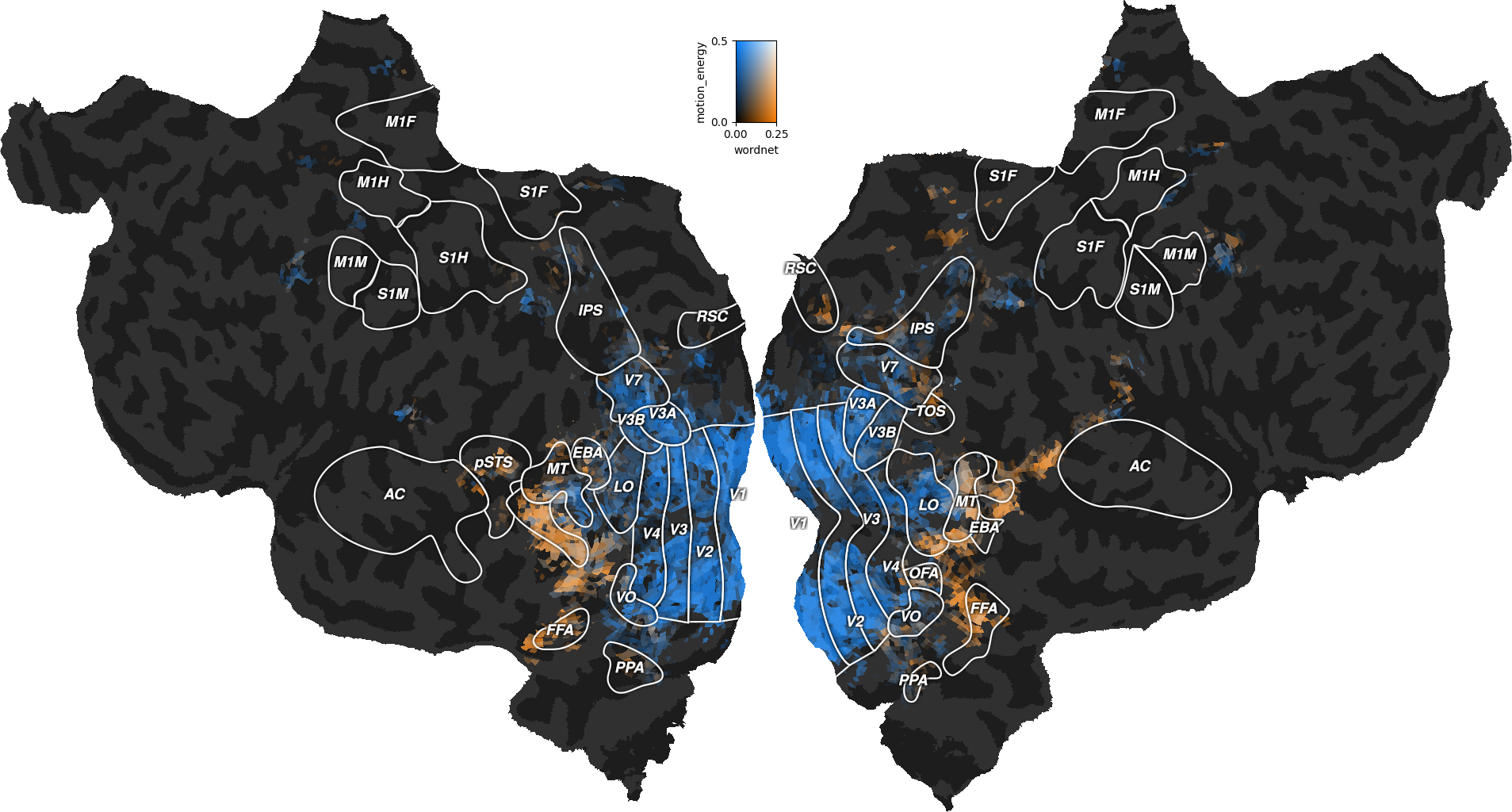

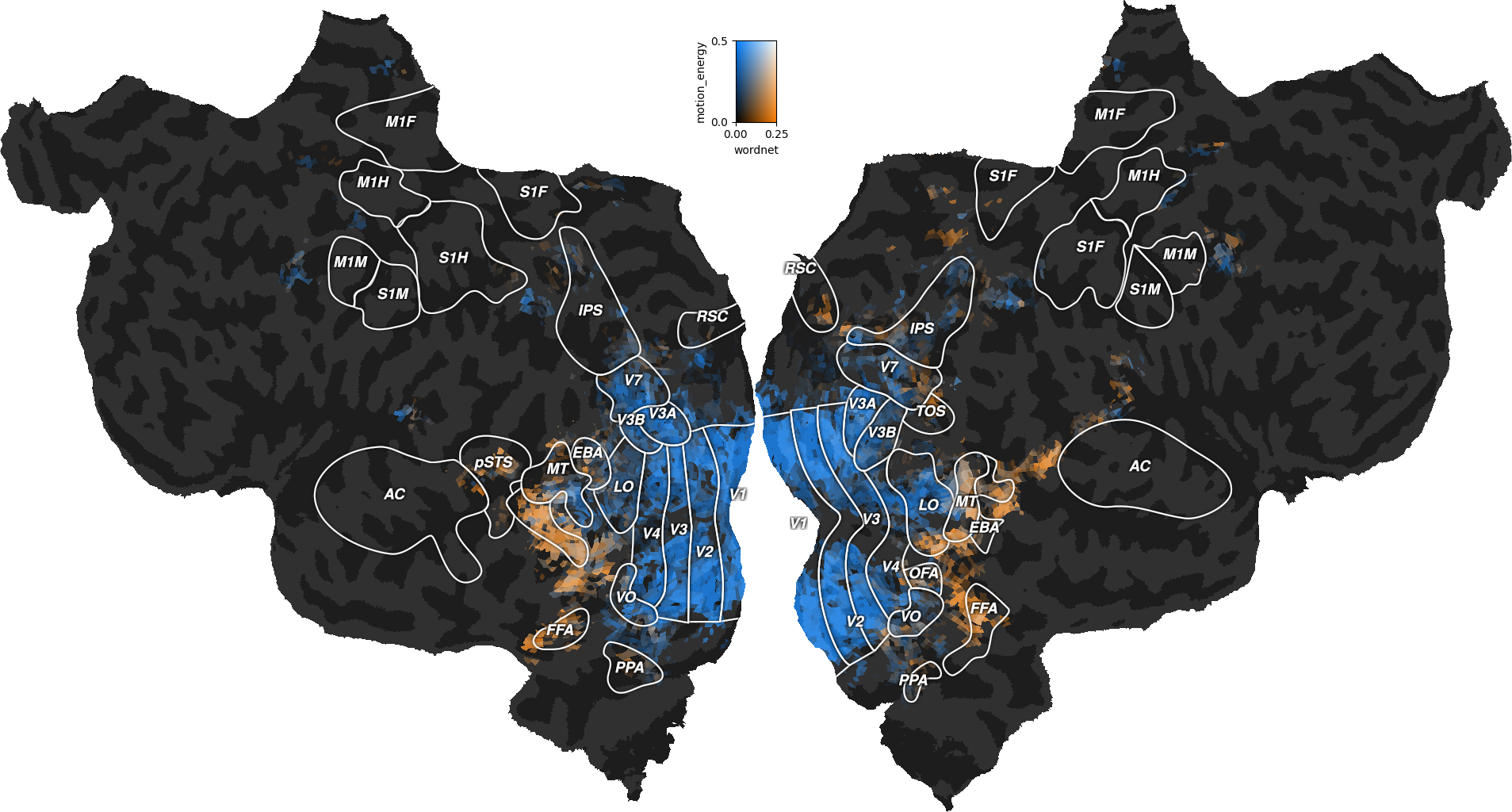

[1] Huth, Alexander G., Nishimoto, S., Vu, A. T., & Gallant, J. L. (2012).

A continuous semantic space describes the representation of thousands of object

and action categories across the human brain. Neuron, 76(6), 1210-1224.

[2] Nishimoto, S., Vu, A. T., Naselaris, T., Benjamini, Y., Yu, B., & Gallant,

J. L. (2011). Reconstructing visual experiences from brain activity evoked by

natural movies. Current Biology, 21(19), 1641-1646.

Cite this dataset

If you publish any work using the data, please cite the original publication [1],

and cite the dataset in the following recommended format:

[3] Huth, A. G., Nishimoto, S., Vu, A. T., Dupre la Tour, T., & Gallant, J. L. (2022).

Gallant Lab Natural Short Clips 3T fMRI Data. http://dx.doi.org/--TBD--

Data files organization

features/ → feature spaces used for voxelwise modeling

motion_energy.hdf → visual motion energy, as described in [2]

wordnet.hdf → visual semantic labels, as described in [1]

mappers/ → plotting mappers for each subject

S01_mapper.hdf

...

S05_mapper.hdf

responses/ → functional responses for each subject

S01_responses.hdf

...

S05_responses.hdf

stimuli/ → natural movie stimuli, for each fMRI run

test.hdf

train_00.hdf

...

train_11.hdf

utils/

example.py → Python functions to analyze the data

wordnet_categories.txt → names of the wordnet labels

wordnet_graph.dot → wordnet graph to plot as in [1]

Data format

All files are hdf5 files, with multiple arrays stored inside.

The names, shapes, and descriptions of each array are listed below.

Each file in `features` contains:

X_train: array of shape (3600, n_features)

Training features.

X_test: array of shape (270, n_features)

Testing features.

run_onsets: array of shape (12, )

Indices of each run onset.

where (n_features = 6555) for `motion_energy.hdf`

and (n_features = 1705) for `wordnet.hdf`.

Each file in `mappers` contains:

voxel_to_flatmap: CSR sparse array of shape (n_pixels, n_voxels)

Mapper from voxels to flatmap image. The sparse array is stored with

four dense arrays: (data, indices, indptr, shape).

voxel_to_fsaverage: CSR sparse array of shape (n_vertices, n_voxels)

Mapper from voxels to FreeSurfer surface. The sparse array is stored

with four dense arrays: (data, indices, indptr, shape).

flatmap_mask: array of shape (width, height)

Pixels of the flatmap image associated with a voxel.

flatmap_rois: array of shape (width, height, 4)

Transparent image with annotated ROIs (for subjects S01, S02, and S03).

flatmap_curvature: array of shape (width, height)

Transparent image with binarized curvature to locate sulci/gyri.

roi_mask_xxx: array of shape (n_voxels, )

Mask indicating which voxels are in the ROI `xxx`.

ROI list is different on each subject. SO4 and S05 have no ROIs.

Each file in `responses` contains:

Y_train: array of shape (3600, n_voxels)

Training responses.

Y_test: array of shape (270, n_voxels)

Testing responses.

run_onsets: array of shape (12, )

Indices of each run onset.

Each file in `stimuli` contains:

stimuli: array of shape (n_images, 512, 512, 3)

Each training run contains 9000 images total.

The test run contains 8100 images total.

The utils.py file contains helpers to load the data in Python.

How to get started

The utils directory contains basic Python helpers to get started with

the data.

More tutorials on voxelwise modeling using this data set are available at

https://github.com/gallantlab/voxelwise_tutorials.

They includes Python downloading tools, data loaders,

plotting tools, and examples of analysis.

Note that to get started, you might not need to download all the data. In

particular, the stimuli data is large, and is already processed into two

feature spaces to be used in voxelwise modeling.

How to get help

The recommended way to ask questions is in the issue tracker on the GitHub page

https://github.com/gallantlab/voxelwise_tutorials/issues.